AudriusButkevicius

Jun 8

The usecase [of glusterfs] was sharing thumbnail cache between multiple ec2 machines in the same az, serving an e-commerce site, before efs was a thing.

The performance of the site tanked really badly due to how long io and discovery took on files in glusterfs, way beyond what was acceptable.

The cross site (read eu->us) gluster sync was also a pile of dirt, failing with obscure errors and getting stuck quite often.

These are just first-hand experiences, and I don’t want to touch it ever again.

Here is another reverse lookup, done using the dig command:

$ dig -x ip-address-here

$ dig -x 75.126.153.206

FreeBSD users can try the drill command:

drill -Qx 54.184.50.208

Sometimes you only want to modify files containing specific content. Combine find, grep, and sed:

# Only replace in files that contain the old text

find . -name "*.yaml" -type f -exec grep -l "oldValue" {} \; | xargs sed -i 's/oldValue/newValue/g'

awk is used to filter and manipulate output from other programs and functions. An awk program consists of rules, each made up of a pattern and an action. The action is executed on the text that matches the pattern. Actions are enclosed in curly braces ({}). Together, a pattern and an action form a rule. On the command line, the entire awk program is usually enclosed in single quotes (').
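A minimal sketch of a single awk rule, assuming some made-up sample data (the names and scores below are hypothetical). The pattern `$2 > 10` selects lines, and the action in braces runs only on those lines:

```shell
#!/bin/sh
# Hypothetical sample data: a name and a numeric score on each line.
scores() {
  printf '%s\n' 'alice 12' 'bob 7' 'carol 31'
}

# One rule = pattern + action.
# Pattern: the second field is greater than 10.
# Action (inside the braces): print the first field.
high_scorers() {
  scores | awk '$2 > 10 { print $1 }'
}

high_scorers
```

If the pattern is omitted, the action applies to every line; if the action is omitted, awk prints each matching line unchanged.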

The sed command is a bit like chess: it takes an hour to learn the basics and a lifetime to master them (or, at least a lot of practice). We'll show you a selection of opening gambits in each of the main categories of sed functionality.

sed is a stream editor that works on piped input or files of text. It doesn't have an interactive text editor interface, however. Rather, you provide instructions for it to follow as it works through the text. This all works in Bash and other command-line shells.
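As a rough illustration of "instructions applied to a stream", here is a small sketch that pipes hypothetical input lines into sed. It only uses the substitute command and the `-n` option with `p`, both of which are standard sed features:

```shell
#!/bin/sh
# Hypothetical input: two lines of text written to stdout.
greet() {
  printf '%s\n' 'hello world' 'hello sed'
}

# Substitute the first "hello" on each line with "HELLO".
shout() {
  greet | sed 's/hello/HELLO/'
}

# -n suppresses the default output; the p command prints only lines matching /sed/.
only_sed() {
  greet | sed -n '/sed/p'
}

shout
only_sed
```

The same instructions work on files: replace the pipe with a filename argument after the sed script.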

This text is a brief description of the features that are present in the Bash shell (version 5.2, 19 September 2022). The Bash home page is http://www.gnu.org/software/bash/.

This is Edition 5.2, last updated 19 September 2022, of The GNU Bash Reference Manual, for Bash, Version 5.2.

Bash contains features that appear in other popular shells, and some features that only appear in Bash. Some of the shells that Bash has borrowed concepts from are the Bourne Shell (sh), the Korn Shell (ksh), and the C-shell (csh and its successor, tcsh). The following menu breaks the features up into categories, noting which features were inspired by other shells and which are specific to Bash.

This manual is meant as a brief introduction to features found in Bash. The Bash manual page should be used as the definitive reference on shell behavior.

us.mirror.ionos.com

powered by IONOS Inc.

Hardware:

2x Intel Xeon Silver 4214R (2.4 GHz, 24 Cores, 48 Threads)

192 GByte RAM

246 TByte storage

20 GBit/sec network connectivity

Located in Karlsruhe / Germany

Software:

This server runs Debian GNU/Linux with:

Nginx

Samba rsync

Welcome to the ZFS Handbook, your definitive guide to mastering the ZFS file system on FreeBSD and Linux. Discover how ZFS can revolutionize your data storage with unmatched reliability, scalability, and advanced features.

Beszel serves as the perfect middle ground between Uptime Kuma and a Grafana + Prometheus setup for my servers. Although it takes a couple of extra commands to deploy Beszel, the app can pull a lot more system metrics than Uptime Kuma. On top of that, it can generate detailed graphics using CPU usage, memory consumption, network bandwidth, system temps, and other historical data, which is far beyond Uptime Kuma’s capabilities. Meanwhile, Beszel is a lot easier to set up than the Grafana and Prometheus combo, as you don’t have to tinker with tons of configuration files and API tokens just to get the monitoring server up and running. //

Beszel does things differently, as it’s compatible with Linux, macOS, and Windows, with the developer planning a potential FreeBSD release in the future. //

Beszel uses a client + server setup for pulling metrics and monitoring your workstation.

NetBSD/i386 is the port of NetBSD to generic machines ("PC clones") with 32-bit x86-family processors. It runs on PCI-Express, PCI, and CardBus systems, as well as older hardware with PCMCIA, VL-bus, EISA, MCA, and ISA (AT-bus) interfaces, with x87 math coprocessors.

Any i486 or better CPU should work - genuine Intel or a compatible such as Cyrix, AMD, or NexGen.

NetBSD/i386 was the original port of NetBSD, and was initially released as NetBSD 0.8 in 1993.

More than 36 years after the release of the 486 and 18 years after Intel stopped making them, leaders of the Linux kernel believe the project can improve itself by leaving i486 support behind. Ingo Molnar, quoting Linus Torvalds regarding "zero real reason for anybody to waste one second" on 486 support, submitted a patch series to the 6.15 kernel that updates its minimum support features. Those requirements now include TSC (Time Stamp Counter) and CX8 (i.e., "fixed" CMPXCHG8B, its own whole thing), features that the 486 lacks (as do some early non-Pentium 586 processors).

It's not the first time Torvalds has suggested dropping support for 32-bit processors and relieving kernel developers from implementing archaic emulation and work-around solutions. "We got rid of i386 support back in 2012. Maybe it's time to get rid of i486 support in 2022," Torvalds wrote in October 2022. Failing major changes to the 6.15 kernel, which will likely arrive late this month, i486 support will be dropped.

Where does that leave people running a 486 system for whatever reason? They can run older versions of the Linux kernel and Linux distributions. They might find recommendations for teensy distros like MenuetOS, KolibriOS, and Visopsys, but all three of those require at least a Pentium. They can run FreeDOS. They might get away with the OS/2 descendant ArcaOS. There are some who have modified Windows XP to run on 486 processors, and hopefully, they will not connect those devices to the Internet.

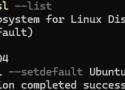

It's really fast and only requires a few lines of shell scripting. You won't need to run systemd inside of WSL 2 either.

This step-by-step guide will help you get started developing with remote containers by setting up Docker Desktop for Windows with WSL 2 (Windows Subsystem for Linux, version 2).

Docker Desktop for Windows provides a development environment for building, shipping, and running dockerized apps. By enabling the WSL 2 based engine, you can run both Linux and Windows containers in Docker Desktop on the same machine.

From my first experience creating a shell script, I learned about the shebang (#!), the special first line used to specify the interpreter for executing the script:

#! /usr/bin/sh

echo "Hello, World!"

So that you can just invoke it with ./hello.sh and it will run with the specified interpreter, assuming the file has execute permissions.
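The whole round trip can be sketched as follows. This is an illustration under assumptions: it writes the script to a temporary directory, and it uses `#!/bin/sh` rather than `/usr/bin/sh` because that path exists on more systems.

```shell
#!/bin/sh
# Create a scratch directory so the sketch does not touch the current one.
workdir=$(mktemp -d)

# Write hello.sh; the first line is the shebang naming the interpreter.
cat > "$workdir/hello.sh" <<'EOF'
#!/bin/sh
echo "Hello, World!"
EOF

# Without the execute bit, invoking the file by path fails with "Permission denied".
chmod +x "$workdir/hello.sh"

# Invoke it by path; the kernel reads the shebang and starts /bin/sh on the file.
output=$("$workdir/hello.sh")
echo "$output"

rm -rf "$workdir"
```

Running `sh hello.sh` instead would ignore the shebang, since the interpreter is then chosen on the command line.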

Torvalds said in 2007 that he could not remember “exactly when I started git development” but that he probably started at or around April 3rd 2005, that the project was self-hosting from April 7th – the first commit of Git is 1244 lines of commented code and described as “the information manager from hell” – and that the first commit of the Linux kernel was on April 16th. //

Git did what he needed within the first year, said Torvalds, “and when it did what I needed, I lost interest.”

Torvalds described himself as a casual user of Git who mainly uses just five commands: git merge, git blame, git log, git commit and git pull – though he adds later in the interview that he also uses git status “fairly regularly.”

Why did Git succeed? Scott Chacon, co-founder of GitHub and now at startup company GitButler, said that it filled a gap at the time when open source was evolving. SVN, which actually has an easier mental model, is centralized. There was no easy way in 2005 for an open source contributor to submit proposed changes to a code base. Git made it simple to fork the code, make a change, and then send a request to the maintainers that they pull the changed code from the fork to the main branch. Git has a command called request-pull which formats an email for sending to a mailing list with the request included, and this is the origin of the term pull request.

There are two ways to install the Docker containerization platform on Windows 10 and 11. It can be installed as a Docker Desktop for Windows app (uses the built-in Hyper-V + Windows Containers features), or as a full Docker Engine installed in a Linux distro running in the Windows Subsystem for Linux (WSL2). This guide will walk you through the installation and basic configuration of Docker Engine in a WSL environment without using Docker Desktop.

rsync -rin --ignore-existing "$LEFT_DIR"/ "$RIGHT_DIR"/|sed -e 's/^[^ ]* /L /'

rsync -rin --ignore-existing "$RIGHT_DIR"/ "$LEFT_DIR"/|sed -e 's/^[^ ]* /R /'

rsync -rin --existing "$LEFT_DIR"/ "$RIGHT_DIR"/|sed -e 's/^/X /'

We've noticed that some of our automatic tests fail when they run at 00:30 but work fine the rest of the day. They fail with the message

gimme gimme gimme

in stderr, which wasn't expected. Why are we getting this output?

Answer:

Dear @colmmacuait, I think that if you type "man" at 0001 hours it should print "gimme gimme gimme". #abba

@marnanel - 3 November 2011

er, that was my fault, I suggested it. Sorry.

Pretty much the whole story is in the commit. The maintainer of man is a good friend of mine, and one day six years ago I jokingly said to him that if you invoke man after midnight it should print "gimme gimme gimme", because of the Abba song called "Gimme gimme gimme a man after midnight".

Well, he did actually put it in. A few people were amused to discover it, and we mostly forgot about it until today.

I can't speak for Col, obviously, but I didn't expect this to ever cause any problems: what sort of test would break on parsing the output of man with no page specified? I suppose I shouldn't be surprised that one turned up eventually, but it did take six years.

(The commit message calls me Thomas, which is my legal first name though I don't use it online much.)

This issue has been fixed with commit 84bde8: Running man with man -w will no longer trigger this easter egg.

When you run a command in bash it will remember the location of that executable so it doesn't have to search the PATH again each time. So if you run the executable, then change the location, bash will still try to use the old location. You should be able to confirm this with hash -t pip3 which will show the old location.

If you run hash -d pip3 it will tell bash to forget the old location and should find the new one next time you try. //

For most bash features it's easier to use help instead of man, so here: help hash
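The caching behaviour can be reproduced in a small bash sketch. Everything named here (`mytool`, the `first` and `second` directories) is hypothetical, and the scenario is a slight variant of the one above: instead of moving the executable, a new copy appears earlier in PATH while bash still remembers the old location.

```shell
#!/usr/bin/env bash
# Sketch only: "mytool" and the two directories are made-up names.
workdir=$(mktemp -d)
mkdir "$workdir/first" "$workdir/second"

# Initially the tool exists only in the directory that is searched second.
printf '#!/bin/sh\necho old\n' > "$workdir/second/mytool"
chmod +x "$workdir/second/mytool"
PATH="$workdir/first:$workdir/second:$PATH"

mytool > /dev/null          # first run: bash searches PATH and remembers the hit
cached=$(hash -t mytool)    # the remembered location

# A new copy appears earlier in PATH, but the old location is still cached.
printf '#!/bin/sh\necho new\n' > "$workdir/first/mytool"
chmod +x "$workdir/first/mytool"
before=$(mytool)

hash -d mytool              # tell bash to forget the remembered location
after=$(mytool)             # PATH is searched again

echo "cached=$cached before=$before after=$after"
rm -rf "$workdir"
```

With default bash settings this should report `before=old` and `after=new`. `hash -r` clears the whole table rather than a single entry.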